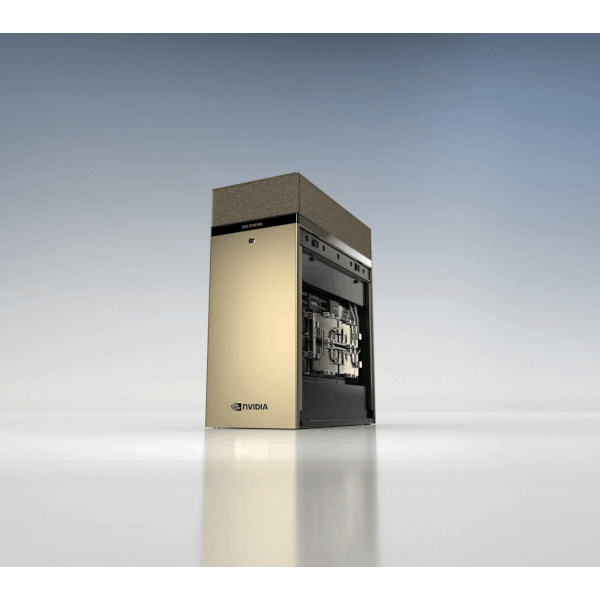

NVIDIA DGX STATION A100 320GB/160GB

- 2.5 petaFLOPS of performance

- World-class AI platform, with no complicated installation or IT help needed

- Server-grade, plug-and-go, and doesn’t require data center power and cooling

- 4 fully interconnected NVIDIA A100 Tensor Core GPUs and up to 320 gigabytes (GB) of GPU memory

About

Data science teams are at the leading edge of innovation, but they’re often left searching for available AI compute cycles to complete projects. They need a dedicated resource that can plug in anywhere and provide maximum performance for multiple, simultaneous users anywhere in the world. NVIDIA DGX Station™ A100 brings AI supercomputing to data science teams, offering data center technology without a data center or additional IT infrastructure. Powerful performance, a fully optimized software stack, and direct access to NVIDIA DGXperts ensure faster time to insights.

Specification

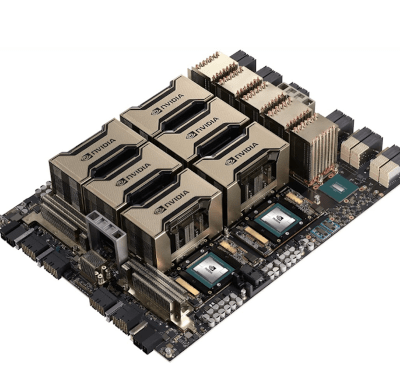

| Component | Specification |
|---|---|
| GPUs | 4x NVIDIA A100 80 GB / 40 GB |
| Performance | 2.5 petaFLOPS AI; 5 petaOPS INT8 |
| GPU Memory | 320 GB total / 160 GB total |
| CPU | Single AMD 7742, 64 cores, 2.25 GHz (base)–3.4 GHz (max boost) |
| System Memory | 512 GB DDR4 |
| Storage | OS: 1x 1.92 TB NVMe drive; internal storage: 7.68 TB U.2 NVMe drive |
| Network | Dual-port 10GBASE-T Ethernet LAN; single-port 1GBASE-T Ethernet BMC management port |
| DGX Display Adapter | 4 GB GPU memory, 4x Mini DisplayPort |
| Software | Ubuntu Linux OS |
| System Acoustics | <37 dB |
| System Weight | 91.0 lbs (43.1 kg) |
| System Dimensions | Height: 25.1 in (639 mm); Width: 10.1 in (256 mm); Length: 20.4 in (518 mm) |
| System Power Usage | 1.5 kW at 100–120 Vac |
| Operating Temperature Range | 5–35 °C (41–95 °F) |
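The GPU memory totals and the Fahrenheit temperature range in the specification follow from simple arithmetic on the listed figures. The Python sketch below is only an illustration of that arithmetic; the constant and function names are hypothetical and are not part of any NVIDIA tool or API.

```python
# Illustrative arithmetic on the listed spec figures.
# All names here are hypothetical; nothing below queries real hardware.

GPU_COUNT = 4  # "4x NVIDIA A100" from the specification

# Per-GPU memory in GB for the two listed configurations.
PER_GPU_MEMORY_GB = {"320GB model": 80, "160GB model": 40}


def total_gpu_memory_gb(per_gpu_gb: int, gpu_count: int = GPU_COUNT) -> int:
    """Total GPU memory is per-GPU memory multiplied by the GPU count."""
    return per_gpu_gb * gpu_count


def celsius_to_fahrenheit(celsius: float) -> float:
    """Standard Celsius-to-Fahrenheit conversion."""
    return celsius * 9 / 5 + 32


# Total GPU memory for each configuration.
totals = {name: total_gpu_memory_gb(gb) for name, gb in PER_GPU_MEMORY_GB.items()}

# Operating temperature range (5 to 35 degrees Celsius) expressed in Fahrenheit.
operating_range_f = tuple(celsius_to_fahrenheit(c) for c in (5, 35))

print(totals)
print(operating_range_f)
```

The results match the "320 GB total / 160 GB total" and "41–95 °F" entries in the specification.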

You May Also Like

Related products

NVIDIA DGX Station

SKU: DGXS-2511C+P2CMI00

- Four NVIDIA Tesla V100 GPUs

- Next-generation NVIDIA NVLink

- Water cooling

- 1/20 power consumption

- Pre-installed standard Ubuntu 14.04 with Caffe, Torch, Theano, BIDMach, cuDNN v2, and CUDA 8.0

NVIDIA DGX B300

SKU: N/A

- Built with NVIDIA Blackwell Ultra GPUs

- 2.3 TB of GPU memory

- 72 petaFLOPS of training performance

- 144 petaFLOPS of inference performance

- NVIDIA networking

- Intel® Xeon® 6776P processors

- Foundation of NVIDIA DGX BasePOD™ and NVIDIA DGX SuperPOD™

- Leverages NVIDIA AI Enterprise and NVIDIA Mission Control software

NVIDIA HGX A100 (8-GPU)

SKU: N/A

- 8x NVIDIA A100 GPUs with 320 GB total GPU memory

- 6x NVIDIA NVSwitches

- 320 GB memory

- 4.8 TB/s total aggregate bandwidth
