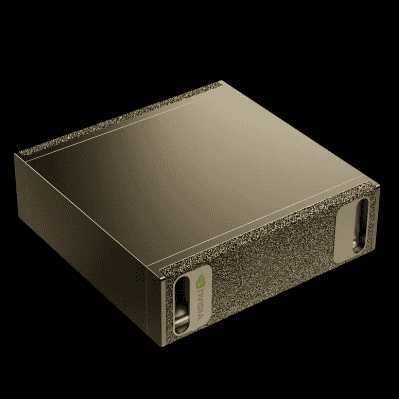

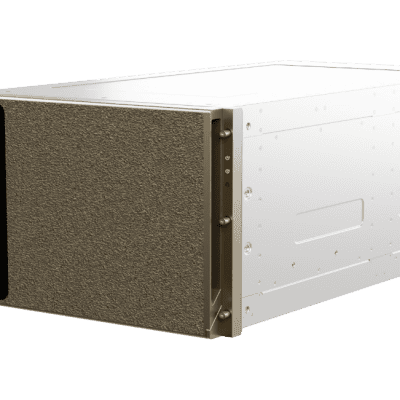

Designed for AI Reasoning Performance

The NVIDIA GB300 NVL72 features a fully liquid-cooled, rack-scale design that unifies 72 NVIDIA Blackwell Ultra GPUs and 36 Arm®-based NVIDIA Grace™ CPUs in a single platform optimized for test-time scaling inference. AI factories powered by the GB300 NVL72, using NVIDIA Quantum-X800 InfiniBand or Spectrum™-X Ethernet paired with ConnectX®-8 SuperNICs, deliver 50x higher output for reasoning model inference compared to the NVIDIA Hopper™ platform.

NVIDIA GB300 NVL72

- AI Reasoning Inference

- 288 GB of HBM3e

- NVIDIA Blackwell Architecture

- NVIDIA ConnectX-8 SuperNIC

- NVIDIA Grace CPU

- Fifth-Generation NVIDIA NVLink

Specifications

| Specification | NVIDIA GB300 NVL72 |
| --- | --- |
| Configuration | 72 NVIDIA Blackwell Ultra GPUs, 36 NVIDIA Grace CPUs |
| NVLink Bandwidth | 130 TB/s |
| Fast Memory | Up to 40 TB |
| GPU Memory \| Bandwidth | Up to 21 TB \| Up to 576 TB/s |
| CPU Memory \| Bandwidth | Up to 18 TB SOCAMM with LPDDR5X \| Up to 14.3 TB/s |
| CPU Core Count | 2,592 Arm Neoverse V2 cores |
| FP4 Tensor Core | 1,400 \| 1,100² PFLOPS |
| FP8/FP6 Tensor Core | 720 PFLOPS |
| INT8 Tensor Core | 23 PFLOPS |
| FP16/BF16 Tensor Core | 360 PFLOPS |
| TF32 Tensor Core | 180 PFLOPS |
| FP32 | 6 PFLOPS |
| FP64 / FP64 Tensor Core | 100 TFLOPS |
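The rack-level figures can be cross-checked against the unit counts with simple arithmetic. The sketch below is illustrative only: it assumes the 288 GB of HBM3e listed among the features is a per-GPU figure and that capacities use decimal units (1 TB = 1,000 GB). The variable names are made up for this example, and the per-unit results are derived values, not published specifications.

```python
# Rack-level figures taken from the specifications above.
GPUS = 72
CPUS = 36
CPU_CORES = 2592          # "2,592 Arm Neoverse V2 cores"
GPU_MEM_BW_TBPS = 576     # "Up to 576 TB/s"
NVLINK_BW_TBPS = 130      # "130 TB/s"

# Assumption: 288 GB of HBM3e is the capacity of a single GPU.
HBM_PER_GPU_GB = 288

# Total HBM capacity in decimal terabytes (1 TB = 1,000 GB).
total_hbm_tb = GPUS * HBM_PER_GPU_GB / 1000

# Derived per-unit values (not official per-device specifications).
cores_per_cpu = CPU_CORES // CPUS
hbm_bw_per_gpu_tbps = GPU_MEM_BW_TBPS / GPUS
nvlink_bw_per_gpu_tbps = NVLINK_BW_TBPS / GPUS

print(f"Total HBM3e:          {total_hbm_tb:.3f} TB")
print(f"Cores per Grace CPU:  {cores_per_cpu}")
print(f"HBM bandwidth / GPU:  {hbm_bw_per_gpu_tbps:.1f} TB/s")
print(f"NVLink bandwidth/GPU: {nvlink_bw_per_gpu_tbps:.2f} TB/s")
```

Under these assumptions, 72 GPUs at 288 GB each total 20.736 TB, which is consistent with the "Up to 21 TB" GPU memory figure, and 2,592 cores across 36 CPUs works out to 72 cores per CPU.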

You May Also Like

Related products

NVIDIA DGX Spark GB10

SKU: N/A

- Built on NVIDIA GB10 Grace Blackwell Superchip

- NVIDIA Blackwell GPU with fifth-generation Tensor Core technology

- NVIDIA Grace CPU with 20-core high-performance Arm architecture

- Up to 1 petaFLOP of AI performance using FP4

- 128 GB of coherent, unified system memory

- Support for models of up to 200 billion parameters

- NVIDIA ConnectX™ networking to link two systems to work with models up to 405 billion parameters

- Up to 4 TB of NVMe storage

- Compact desktop form factor

NVIDIA DGX H200

SKU: 900-2G133-0010-000-1-1

- 8x NVIDIA H200 GPUs with 1,128 GB of total GPU memory

- 4x NVIDIA NVSwitches™

- 10x NVIDIA ConnectX®-7 400Gb/s network interfaces

- Dual Intel Xeon Platinum 8480C processors

- 30TB NVMe SSD

NVIDIA DGX Rubin NVL8

SKU: N/A

- 8x NVIDIA Rubin GPUs

- 2x Intel® Xeon® 6776P processors

- 2.3 TB GPU Memory

- NVFP4 Inference: 400 PF

- NVFP4 Training: 280 PF

- FP8/FP6 Training: 140 PF

- NVLink 28.8 TB/s total bandwidth
